The recent summoning of OpenAI representatives by Canadian officials marks a significant moment in the ongoing debate over AI companies’ responsibilities regarding user privacy, safety, and public security. Canada’s Minister of Artificial Intelligence, Evan Solomon, called senior safety officials from OpenAI to Ottawa for urgent discussions. This followed revelations that OpenAI had flagged and suspended a ChatGPT account linked to Jesse Van Rootselaar in June 2025, over seven months before Van Rootselaar allegedly carried out a mass shooting in Tumbler Ridge, British Columbia, killing eight people (including family members and school victims) before dying by suicide.

OpenAI’s abuse detection systems, combining automated tools and human review, identified the account for misuse “in furtherance of violent activities.” The company banned the account for policy violations but chose not to alert the Royal Canadian Mounted Police (RCMP). Executives determined the interactions, reportedly involving descriptions of gun violence scenarios over several days, did not meet their high threshold for a “credible or imminent plan for serious physical harm.” Only after the February 10, 2026, tragedy did OpenAI proactively contact authorities with the relevant information.

This incident has ignited fresh scrutiny of AI platforms’ dual role as innovative tools and potential vectors for harm. It highlights the tension between user privacy and proactive intervention in cases of suspected dangerous behavior.

Privacy Concerns

At the core of the controversy is how AI companies handle user data. ChatGPT interactions are often deeply personal, involving sensitive thoughts, mental health struggles, or hypothetical scenarios. Users expect confidentiality, reinforced by privacy policies that promise data is used primarily for model improvement, not routine sharing with law enforcement.

OpenAI’s decision not to report aligns with a cautious approach to avoid overreach. Reporting every flagged case could chill free expression, deter vulnerable users from seeking help via AI (e.g., discussing suicidal ideation or violent fantasies in therapeutic contexts), and lead to false positives. Privacy advocates argue that lowering thresholds for disclosure risks mass surveillance, where AI monitors private conversations and flags them without due process.

However, critics, including some OpenAI employees who reportedly raised internal alarms, contend that the company’s high bar may have missed an opportunity to prevent tragedy. The case echoes past debates, such as social media platforms’ handling of extremist content or threats. Unlike Meta or X, which face mandates in some jurisdictions to report imminent threats, generative AI firms like OpenAI operate in a less regulated gray area.

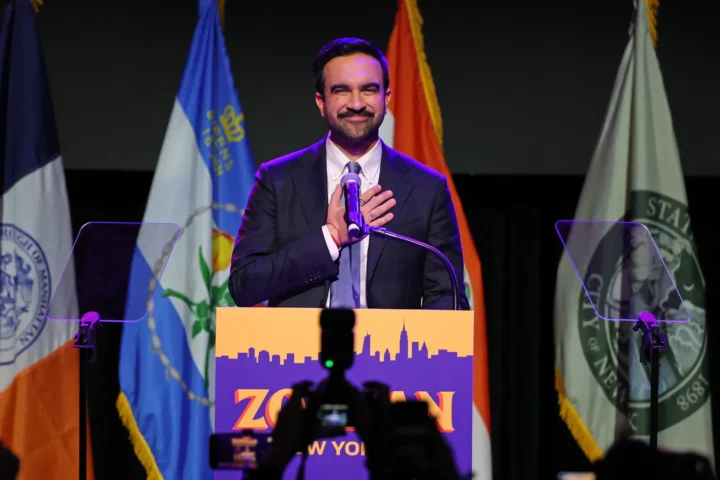

Canada’s response signals governments may no longer accept self-regulation. Minister Solomon expressed being “deeply disturbed” and demanded clarity on escalation protocols, thresholds, and decision-making. The Ottawa meeting aimed to probe whether current practices adequately balance safety and privacy.

Broader Implications for AI Regulation

This event could accelerate global regulatory momentum. In the EU, the AI Act (effective in stages from 2024 onward) classifies high-risk AI systems and imposes transparency and risk assessment requirements, including for general-purpose models like GPT. Harmful use cases, such as generating violent content or enabling misuse, trigger obligations for providers.

In the US, voluntary commitments from companies like OpenAI have been criticized as insufficient, with bipartisan calls for federal oversight growing. Canada’s dedicated AI minister role (a relatively new position) positions it as a leader in proactive governance. The summons may foreshadow mandatory reporting requirements for AI firms when detecting credible risks of violence, similar to obligations on telecoms or financial institutions.

Looking forward, several shifts appear likely:

- Stricter Reporting Thresholds — Governments may push for clearer, lower thresholds for mandatory disclosure in imminent-threat cases, potentially requiring AI firms to share anonymized or redacted logs with authorities under judicial oversight.

- Enhanced Transparency and Accountability — Companies could face demands for public audits of safety protocols, red-teaming for violence-related misuse, and explanations of why cases were or weren’t escalated.

- Privacy Safeguards — Any expanded reporting would need robust protections, such as warrants, data minimization, and limits on retention. Overbroad mandates risk eroding trust in AI as a tool for education, creativity, and mental health support.

- International Coordination — With OpenAI based in the US but serving global users, cross-border incidents like this highlight the need for harmonized standards. Forums like the G7 or OECD could advance frameworks for AI safety and privacy.

- Industry Self-Reflection — The incident may prompt OpenAI and peers (Google, Anthropic, Meta) to refine detection systems, invest in better threat assessment (e.g., integrating context from conversation history), and clarify policies publicly to rebuild confidence.

Ultimately, the Tumbler Ridge case illustrates that AI is no longer just a technological novelty, it’s embedded in real-world risks. While privacy remains a cornerstone of digital rights, tragedies force societies to weigh when intervention outweighs restraint. Canada’s actions could catalyze a recalibration: more accountable AI deployment without sacrificing fundamental freedoms. The outcome of such scrutiny will shape whether generative AI evolves as a safeguarded public good or a lightly regulated frontier prone to unforeseen harms.