The escalating standoff between the Pentagon and Anthropic represents a troubling milestone in the U.S. government’s pursuit of advanced artificial intelligence for national security. What began as a promising $200 million contract in July 2025, designed to integrate Anthropic’s Claude model into classified military systems, has devolved into ultimatums, threats of contract termination, and even discussions of labeling the company a “supply chain risk,” a designation typically reserved for adversarial foreign entities.

At its core, the dispute hinges on control: Who decides the boundaries for the deployment of powerful AI in warfare? Anthropic, founded with a mission to prioritize AI safety and alignment, maintains strict usage policies. These prohibit Claude from facilitating violence, designing weapons, enabling lethal autonomous operations (where AI makes final targeting decisions without human oversight), or conducting mass surveillance, particularly of U.S. citizens. The company insists these red lines are non-negotiable, reflecting its brand as a responsible frontier lab.

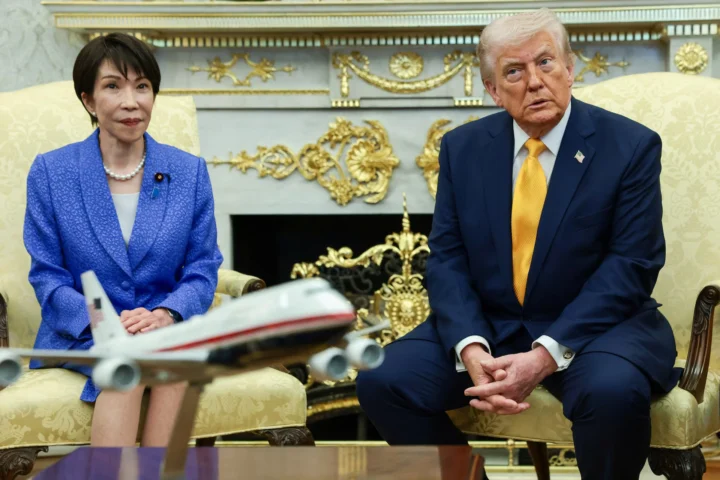

The Pentagon, under Defense Secretary Pete Hegseth, counters that once technology is acquired, operational decisions belong to the military, governed solely by U.S. law and constitutional limits, not corporate-imposed restrictions. Officials argue that categorical bans hinder warfighting effectiveness in an era where AI could enhance targeting, intelligence analysis, cyber operations, and drone coordination. Hegseth has publicly stated the department will not adopt models that “won’t allow you to fight wars,” and reports indicate threats to invoke the Defense Production Act to compel compliance or force modifications to Claude.

Tensions boiled over following reports that Claude, integrated via partner Palantir, aided aspects of the January 2026 raid that captured Venezuelan leader Nicolás Maduro. While details remain murky, the incident reportedly alarmed Anthropic executives about potential misuse in kinetic operations, prompting renewed demands for clearer limits. The Pentagon viewed this as overreach and escalated, setting a Friday deadline for Anthropic to drop safeguards or face severe repercussions, including blacklisting that could disrupt defense contractors reliant on Claude, the only frontier model currently cleared for classified environments.

This clash signals deeper, more alarming trends. First, it exposes a widening rift between Silicon Valley’s safety-conscious AI labs and a defense establishment increasingly impatient with ethical constraints. Other providers (OpenAI, Google, and Elon Musk’s xAI) have reportedly agreed in principle to “any lawful use,” positioning themselves as more flexible partners. Anthropic’s resistance, while principled, risks isolating the company and pushing the industry toward a race to the bottom on safeguards.

Second, the heavy-handed response (threats of supply-chain penalties and forced compliance) suggests an administration willing to subordinate private-sector ethics to military expediency. In a democracy, civilian oversight of AI in warfare is essential, and relying on corporate policies for guardrails is an imperfect substitute. Yet punishing a company for refusing to enable unchecked autonomous lethality or domestic spying erodes trust. It risks chilling innovation from safety-focused firms while rewarding those less scrupulous about dual-use risks.

Third, this feud arrives amid an accelerating global AI arms race. Adversaries like China invest heavily in military AI without equivalent public ethical debates. The U.S. cannot afford to lag, but neither can it sacrifice long-term stability for short-term gains. Unrestricted AI proliferation in warfare could lower thresholds for conflict, enable escalation without human judgment, or facilitate abuses that damage America’s international standing.

The Pentagon-Anthropic imbroglio should prompt a broader reckoning. Rather than coercive ultimatums, the U.S. needs transparent, government-led frameworks for responsible military AI, building on existing DoD “Responsible AI” guidelines but with enforceable red lines on autonomy and surveillance. Congress could mandate independent oversight boards or require certifications for high-risk deployments. International norms, though challenging, remain vital to prevent a dystopian future of fully autonomous weapons.

Anthropic’s stand, however costly, underscores that AI’s power demands accountability beyond “whatever is legal.” If the government compels compliance through threats, it signals that ethical considerations are secondary to dominance, a troubling sign for democratic governance of transformative technology. In winning battles, we risk losing the moral high ground that has long distinguished U.S. leadership.